Over the weekend, I was reading Anthropic’s paper “Emotion Concepts and their Function in a Large Language Model.” It’s a fascinating piece of work.

What struck me most, though, was a tension at the heart of it. On one hand, you have highly advanced scientific methods. On the other, a framework for thinking about emotion that feels oddly outdated. It’s a bit like applying modern medical tools to analyse the four humours.

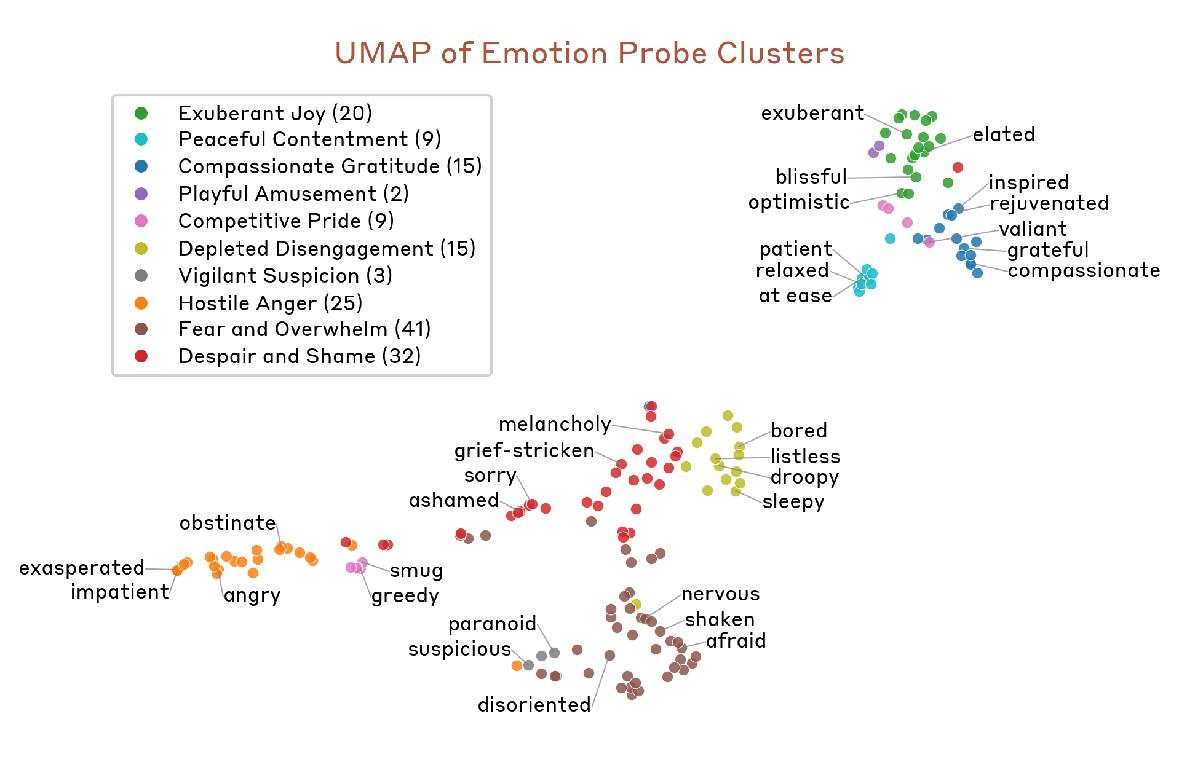

The paper shows that what we might call “AI emotions” or emotion vectors map surprisingly closely to human ones. They cluster in familiar ways, with related states sitting near each other in the model’s internal structure.

What that looks like in practice is a map where similar emotional states naturally group together. Exasperation sits near impatience, anxiety clusters with fear, calm states gather in their own region.

Even without understanding the underlying maths, the pattern is intuitive. Emotions that feel related to us are also represented as related inside the model. That’s the important part, because it suggests the model isn’t just mimicking language on the surface. It’s organising internal representations in a way that mirrors how we structure meaning ourselves.
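For anyone who does want a feel for the maths, "sitting near each other" is usually measured with something like cosine similarity between vectors. Here is a minimal sketch of that idea. The three-dimensional vectors and the helper names are my own invention for illustration; they are not taken from the paper or from any real model, and I'm only assuming cosine similarity as a reasonable stand-in for however the authors measure closeness.

```python
import math

# Toy 3-dimensional "emotion vectors". These numbers are invented for
# illustration only; real model representations have far more dimensions.
TOY_VECTORS = {
    "exasperation": [0.9, 0.8, 0.1],
    "impatience": [0.8, 0.9, 0.2],
    "calm": [-0.7, -0.8, 0.3],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1 = same direction, -1 = opposite."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def nearest(name, vectors):
    """Return the other label whose vector is most similar to `name`."""
    others = [n for n in vectors if n != name]
    return max(others, key=lambda n: cosine_similarity(vectors[name], vectors[n]))


if __name__ == "__main__":
    for other in ("impatience", "calm"):
        sim = cosine_similarity(TOY_VECTORS["exasperation"], TOY_VECTORS[other])
        print(f"exasperation vs {other}: {sim:.2f}")
    print("nearest to exasperation:", nearest("exasperation", TOY_VECTORS))
```

With these made-up numbers, exasperation lands close to impatience and far from calm, which is the kind of grouping the map above is showing.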

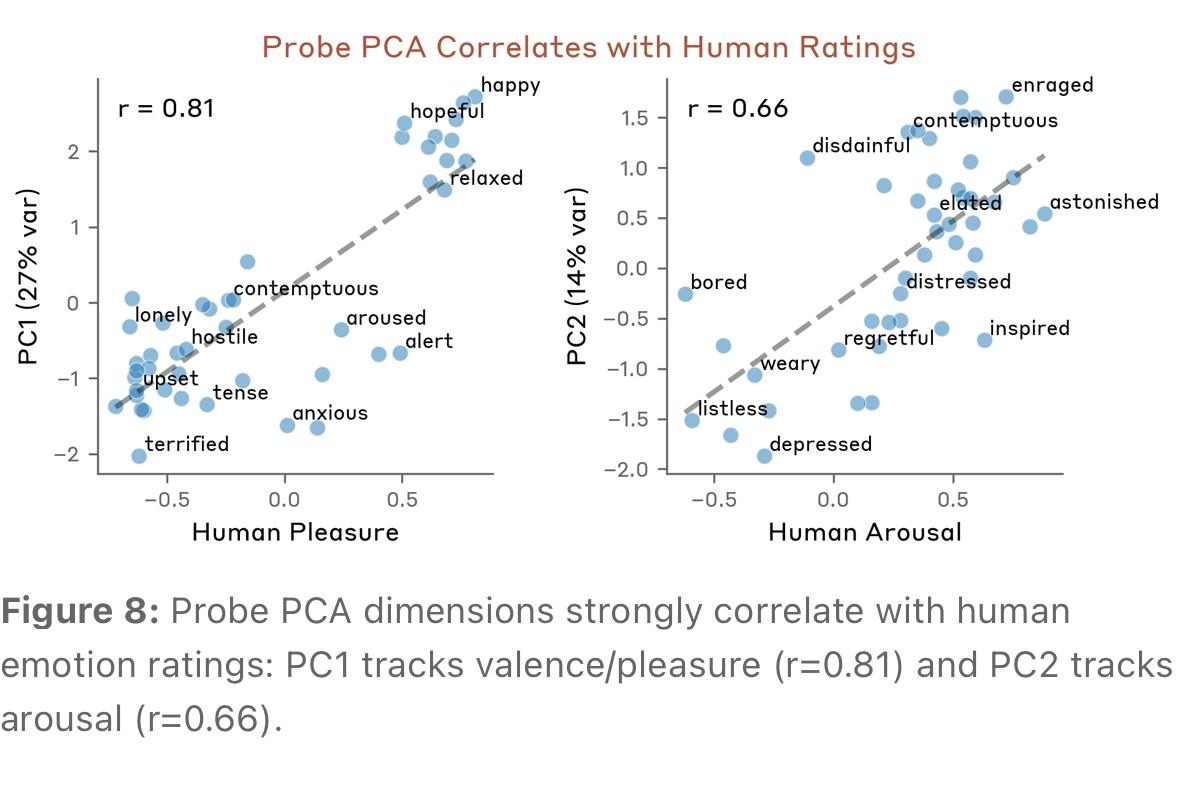

To take this a step further, the researchers didn’t just look at clustering. They also tested whether these internal representations align with how humans actually experience emotion.

The result is a clear correlation between how the model organises emotional states and how humans rate them.

One axis captures valence, how positive or negative something feels. The other captures arousal, how intense it is. As human ratings shift, the model’s internal structure shifts with them.
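To make "shifts with them" a little more concrete, here is a rough sketch of how you might check that kind of alignment: take a score for each emotion along one of the model's axes, take human ratings for the same emotions, and compute a Pearson correlation. Every number below is invented for illustration, the variable names are hypothetical, and I'm assuming Pearson correlation as the measure rather than claiming it is the paper's exact method.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Invented human valence ratings (negative = unpleasant, positive = pleasant).
human_valence = {"joy": 0.9, "calm": 0.6, "anxiety": -0.5, "grief": -0.9}

# Invented scores for the same emotions along a hypothetical "valence axis"
# inside a model. Not real measurements.
model_axis_score = {"joy": 1.2, "calm": 0.5, "anxiety": -0.4, "grief": -1.1}

emotions = sorted(human_valence)
r = pearson(
    [human_valence[e] for e in emotions],
    [model_axis_score[e] for e in emotions],
)

if __name__ == "__main__":
    print(f"correlation between human ratings and model axis: {r:.2f}")
```

A value near 1 means the two orderings move together; the same check could be repeated with arousal ratings for the second axis.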

This isn’t just surface similarity. The underlying structure is aligned.

So how do we make sense of that?

One possibility is that we’re missing something fundamental. What if all concepts are, at their core, emotional? Not just anger or grief, but also things like “table” or “chair.” If you analysed them in the same way, you’d likely see similar patterns. The differences may be subtler, but the structure would be the same.

This suggests the divide between “emotional” concepts and everything else may not really hold. And yet we still separate thinking from feeling, reserving “emotion” for a narrow set of states. If the structure is shared, that distinction becomes harder to defend.

At a basic level, the mechanism is simple. Train a system on human expression and it will begin to mirror the patterns within it. The authors are careful not to overstate this. Human emotion is embodied, and AI doesn’t “feel” in the same way, but the structure is there. Models already show signs of maintaining separate representations for self and others, something close to a basic theory of mind.

Which leads to a more unsettling thought: human thinking is constrained by biology. We can only hold so much in mind. We feel pain, we fear death, and we respond to others. That’s why psychopathy unsettles us: it loosens those constraints.

AI, however, is trained on human expression but not bound by human biology. If it begins to access patterns beyond what we’ve expressed, it may also form concepts beyond our frame of reference.

At a guess, that may already be happening...